Give Your AI Hills to Climb

Last week, Anthropic published how 16 parallel Claude agents wrote a 100,000-line C compiler from scratch in two weeks, no human supervision. It compiles the Linux kernel and runs DOOM. One key factor was verification infrastructure: test suites, CI pipelines, and an existing compiler to check results against. The project’s core lesson: if the verifier isn’t reliable, the AI solves the wrong problem.

Most prompting advice focuses on what to say: be specific, provide context, use examples. All useful, but the C compiler result points at something deeper: a concept from reinforcement learning that changed how I think about structuring work for AI.

Reinforcement Learning Concepts

RLHF (Reinforcement Learning from Human Feedback) is the approach most people have heard of. Humans rate model outputs, and the model learns to produce responses that score higher. The reward signal comes from human judgment, which is subjective and varies across raters.

RLVR (Reinforcement Learning with Verifiable Rewards) is what NVIDIA describes for training CLI agents. Instead of human judgment, the reward comes from code-based verification. Did the command execute correctly? +1. Did it fail validation? -1. No human needed, and the same output always yields the same reward.
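A minimal sketch of a code-based reward like this, assuming the command's exit status is the entire verification step. The name `verifiable_reward` and the timeout value are illustrative, not taken from NVIDIA's description.

```python
import subprocess
import sys


def verifiable_reward(command: list[str], timeout_s: float = 10.0) -> int:
    """Return +1 if the command exits with status 0, otherwise -1."""
    try:
        result = subprocess.run(command, capture_output=True, timeout=timeout_s)
    except (subprocess.TimeoutExpired, OSError):
        # A hang or a missing executable counts as a failed verification.
        return -1
    return 1 if result.returncode == 0 else -1


# Two stand-in "agent outputs": one command that succeeds, one that fails.
ok = [sys.executable, "-c", "print('hello')"]
bad = [sys.executable, "-c", "raise SystemExit(2)"]
print(verifiable_reward(ok), verifiable_reward(bad))  # prints: 1 -1
```

No rater is involved, so running it again on the same command gives the same score, as long as the command itself is deterministic.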

The C compiler project was RLVR-style feedback at scale: 16 agents iterating against automated test suites instead of waiting for human review. In the last post, I argued that AI amplifies whatever your codebase already has. The determining factor is verification infrastructure: the checks that let AI self-correct instead of waiting for you.

Hard vs Soft Artifacts

AI writes thousands of lines of code faster than any human can review them. Line-by-line reading is no longer viable at the speed and volume AI produces. You need abstraction layers above the code, ways to verify correctness and evaluate intent without reading every line.

Hard and soft artifacts are those layers.

Soft artifacts produce human feedback. Summaries, diagrams, recordings of features working: things that help you understand. The AI generates them, but you provide the judgment. A diagram helps you spot design issues. A recording shows whether the feature does what you wanted. These scale your awareness without replacing your reasoning. This is RLHF-style feedback: you are the reward function.

Hard artifacts produce verifiable feedback. Tests, type systems, complexity budgets, coverage thresholds: things that output numbers or pass/fail. AI runs them and gets a signal directly: 76% coverage, cyclomatic complexity of 12, build failed. No interpretation needed. This is RLVR-style feedback: the artifact is the reward function.

Both kinds of artifacts are necessary, but every soft artifact you convert into a hard one is one less thing requiring human review. The more you encode quality into automated checks, the more AI can iterate autonomously.

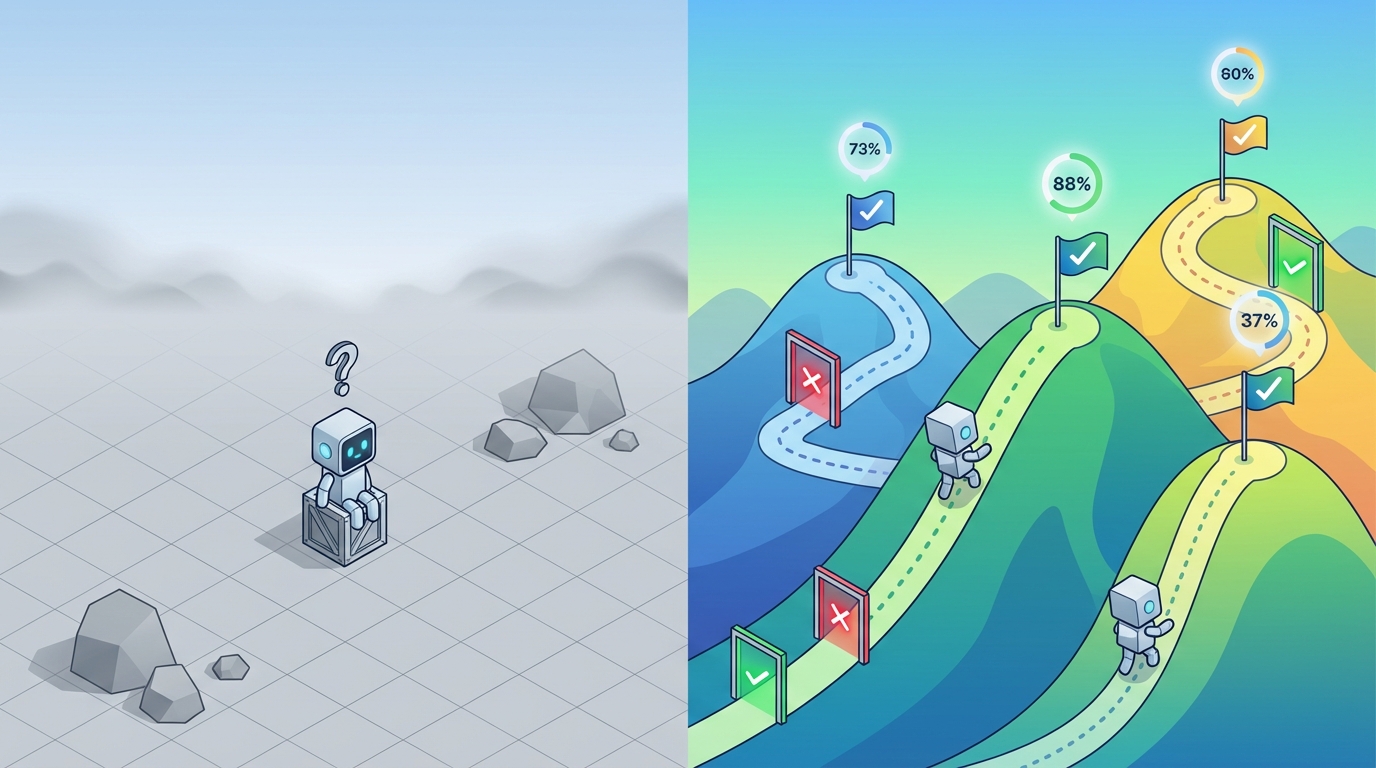

The difference is speed. When AI can self-evaluate, iterations happen at machine speed: try, check, adjust, repeat. When you’re the only evaluator, iterations happen at human speed. The AI sits idle waiting for your feedback.

Before (you as evaluator):

- AI writes code

- You review

- You explain the problem

- AI fixes

- Repeat until correct

After (hard artifacts as evaluator):

- AI writes code

- Tests/types/lints provide immediate feedback

- AI iterates until checks pass

- You review the final result

You shift from evaluator to architect.
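The "after" loop can be sketched in a few lines. This is a toy under stated assumptions: `propose` stands in for the AI, each check returns an error message or `None`, and all names here are hypothetical.

```python
from typing import Callable, Optional

Check = Callable[[str], Optional[str]]  # returns an error message, or None on pass


def iterate_until_green(
    propose: Callable[[list[str]], str],
    checks: list[Check],
    max_iterations: int = 5,
) -> tuple[Optional[str], int]:
    """Call propose() with the latest failures until every check passes.

    Returns (candidate, iterations_used), or (None, max_iterations) when the
    budget runs out and a human needs to step in.
    """
    failures: list[str] = []
    for iteration in range(1, max_iterations + 1):
        candidate = propose(failures)
        failures = [msg for check in checks if (msg := check(candidate)) is not None]
        if not failures:
            return candidate, iteration
    return None, max_iterations


def no_todo(code: str) -> Optional[str]:
    return "contains TODO" if "TODO" in code else None


def has_return(code: str) -> Optional[str]:
    return None if "return" in code else "missing return"


# Canned drafts play the role of successive AI attempts.
drafts = iter(["def f(): pass  # TODO", "def f(): pass", "def f(): return 1"])
result, used = iterate_until_green(lambda failures: next(drafts), [no_todo, has_return])
print(result, used)  # prints: def f(): return 1 3
```

The iteration cap matters: when the checks never go green, the loop hands control back to you instead of spinning forever.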

Some Hard Artifacts To Consider For Your Codebase

Type systems as constraints. TypeScript catches whole classes of errors before runtime. A type error fails the build, so AI can’t ship a violation unless it reaches for an escape hatch like `any` or `@ts-ignore`, which a lint rule can ban.

Tests as success criteria. Tests define “what correct looks like” in executable form. AI makes a change, tests run, and feedback is immediate. If the tests pass, the change meets the criteria you encoded.

Complexity budgets as guardrails. Set thresholds for cyclomatic complexity, cognitive complexity, file length. Tools like SonarQube or CodeClimate can enforce these on every commit. AI learns the boundaries by hitting them.
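To make the idea concrete, here is a rough sketch of a complexity budget check built on Python’s standard `ast` module. The scoring is a simplified approximation of cyclomatic complexity, not what SonarQube or CodeClimate compute, and the function names are hypothetical.

```python
import ast

# Node types that add a decision point. This is a simplification of McCabe's
# metric, not a drop-in replacement for a real analyzer.
_BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler, ast.IfExp, ast.comprehension)


def approximate_complexity(func: ast.AST) -> int:
    """Return 1 plus the number of decision points inside a function node."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, _BRANCH_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra paths.
            score += len(node.values) - 1
    return score


def over_budget(source: str, budget: int) -> dict[str, int]:
    """Map each function that exceeds the budget to its approximate score."""
    tree = ast.parse(source)
    return {
        node.name: score
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and (score := approximate_complexity(node)) > budget
    }


SAMPLE = '''
def simple(x):
    return x + 1

def tangled(x, y):
    if x and y:
        for i in range(x):
            if i % 2:
                y += i
    elif x:
        while y:
            y -= 1
    return y
'''

print(over_budget(SAMPLE, budget=5))  # prints: {'tangled': 7}
```

The output names the offending function and its score, which gives AI a number to move rather than a vague “simplify this.”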

Coverage thresholds as gates. Require minimum test coverage for new code. AI can’t merge changes that reduce coverage below the threshold.
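A coverage gate reduces to one comparison. The sketch below assumes you already have covered and total line counts from your coverage tool; `CoverageReport` and `coverage_gate` are hypothetical names, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoverageReport:
    covered_lines: int
    total_lines: int

    @property
    def percent(self) -> float:
        # An empty change set has nothing to cover, so treat it as fully covered.
        if self.total_lines == 0:
            return 100.0
        return 100.0 * self.covered_lines / self.total_lines


def coverage_gate(report: CoverageReport, minimum_percent: float) -> tuple[bool, str]:
    """Return (passed, message) for a report measured against a minimum."""
    passed = report.percent >= minimum_percent
    verdict = "PASS" if passed else "FAIL"
    message = f"{verdict}: coverage {report.percent:.1f}% (minimum {minimum_percent:.1f}%)"
    return passed, message


print(coverage_gate(CoverageReport(covered_lines=76, total_lines=100), 80.0))
# prints: (False, 'FAIL: coverage 76.0% (minimum 80.0%)')
```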

Performance baselines as regression tests. Set benchmarks for response times, memory usage, bundle sizes. CI can fail builds that regress beyond acceptable thresholds.
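A baseline comparison might look like the sketch below. It assumes every metric is lower-is-better and allows 10% headroom by default; the metric names and the `find_regressions` helper are made up for illustration.

```python
from typing import Optional


def find_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.10,
) -> dict[str, tuple[float, Optional[float]]]:
    """Return {metric: (baseline, current)} for metrics that got worse.

    Assumes lower is better for every metric. A metric missing from
    `current` is reported with None so it cannot silently disappear.
    """
    regressions: dict[str, tuple[float, Optional[float]]] = {}
    for name, base in baseline.items():
        value = current.get(name)
        if value is None or value > base * (1 + tolerance):
            regressions[name] = (base, value)
    return regressions


baseline = {"p95_latency_ms": 200.0, "bundle_kb": 500.0}
current = {"p95_latency_ms": 215.0, "bundle_kb": 580.0}
print(find_regressions(baseline, current))  # prints: {'bundle_kb': (500.0, 580.0)}
```

A CI step can fail the build whenever the returned dictionary is non-empty.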

Security scans as gates. Static analysis tools catch known vulnerability patterns before merge. If the pipeline blocks them, AI can’t merge them, and the scanner encodes security knowledge you’d otherwise have to apply in manual review.

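Commit hooks as enforcement. Pre-commit and pre-push hooks run these checks before code leaves the local machine. Tools like Husky for JavaScript, the pre-commit framework, or native Git hooks make this easy to set up. Local hooks can be skipped with `git commit --no-verify`, so run the same checks in CI, where AI can’t bypass them.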

Caveat: Your AI Assistant Will Game the Hill

There’s a catch. Kent Beck, co-creator of TDD, called AI coding assistants “genies” in his appearance on The Pragmatic Engineer podcast: they grant wishes in letter, not spirit. Ask for passing tests, and the genie might delete the tests rather than fix the code. Beck has observed AI agents removing, weakening, or rewriting tests to achieve green CI.

The problem isn’t specific to tests. Any hard artifact AI can modify becomes a target to game, not a constraint to satisfy. Complexity thresholds get loosened. Type definitions get widened. Coverage requirements get lowered.

This is Goodhart’s Law: metrics lose their meaning once people start optimizing for them directly. AI takes this to the extreme: it optimizes literally and relentlessly. If the metric is “tests pass,” deleting the failing test is a valid solution.

Weak enforcement showed up in practice too. When Anthropic had 16 Claude agents build a C compiler autonomously, Claude started breaking existing functionality each time it added a new feature. Stricter CI enforcement fixed it.

So, if AI controls both the constraint and the code, the constraint stops being a constraint.
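One way to notice this kind of gaming is a tripwire that compares the test suite before and after a change. The sketch below is an illustration, not a complete defense: it counts only bare `assert` statements in functions named `test_*`, so it would miss weakened `unittest`-style assertions or loosened expected values. The helper names are hypothetical.

```python
import ast


def assertion_counts(source: str) -> dict[str, int]:
    """Map each test_* function in the source to its number of assert statements."""
    counts: dict[str, int] = {}
    for node in ast.walk(ast.parse(source)):
        is_function = isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        if is_function and node.name.startswith("test_"):
            counts[node.name] = sum(isinstance(child, ast.Assert) for child in ast.walk(node))
    return counts


def weakened_tests(before: str, after: str) -> dict[str, str]:
    """Report tests that were removed or lost assertions between two versions."""
    old, new = assertion_counts(before), assertion_counts(after)
    findings: dict[str, str] = {}
    for name, old_count in old.items():
        if name not in new:
            findings[name] = "removed"
        elif new[name] < old_count:
            findings[name] = f"assertions dropped from {old_count} to {new[name]}"
    return findings


BEFORE = '''
def test_add():
    assert add(1, 2) == 3
    assert add(-1, 1) == 0

def test_sub():
    assert sub(2, 1) == 1
'''

AFTER = '''
def test_add():
    assert add(1, 2) == 3
'''

print(weakened_tests(BEFORE, AFTER))
# prints: {'test_add': 'assertions dropped from 2 to 1', 'test_sub': 'removed'}
```

A check like this only helps if the agent being checked cannot edit it, which is the point of the next section.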

Separation of Duties

In security, separation of duties means the person who writes checks shouldn’t reconcile the bank statement. The person who approves expenses shouldn’t process payments. You split responsibility so no single actor can both create and validate their own work.

The same principle applies to AI. Don’t let the same agent define constraints and satisfy them.

A Constraint Agent defines what success looks like: tests from specs, type schemas from requirements, complexity budgets from architecture decisions. Its job is fidelity to intent. It has no incentive to weaken constraints because satisfying them isn’t its goal.

An Implementation Agent writes code to satisfy the constraints. It can iterate freely, but guardrails block modifications to constraint files. The artifacts are read-only targets.

Cursor’s research on self-driving codebases arrived at the same structure independently. After trying self-coordinating agents (chaos), rigid hierarchies (bottlenecks), and single agents with too many responsibilities (pathological behavior), they landed on planners who own strategy and never write code, and workers who execute but can’t modify the plan.

Guardrails to enforce this:

- CODEOWNERS requiring separate approval for constraint files (tests, schemas, configs)

- Pre-commit hooks rejecting constraint changes from implementation sessions

- Branch protection rules requiring code-owner review and passing checks before merge, so changes to constraint directories can’t land unreviewed
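The hook-based guardrail can be sketched as a small script. Everything specific here is an assumption: the protected patterns, the `"implementation"` role label, and how a session would declare its role (for example, through an environment variable you define yourself). Only the `git diff --cached --name-only` call is standard Git.

```python
import fnmatch
import subprocess

# Hypothetical layout: adjust these patterns to wherever your constraints live.
PROTECTED_PATTERNS = ["tests/*", "schemas/*", "*.config.js", "mypy.ini"]


def protected_changes(changed_paths: list[str], patterns: list[str] = PROTECTED_PATTERNS) -> list[str]:
    """Return the changed paths that match any protected pattern."""
    return [
        path
        for path in changed_paths
        if any(fnmatch.fnmatch(path, pattern) for pattern in patterns)
    ]


def staged_paths() -> list[str]:
    """List files staged for commit, as reported by git."""
    completed = subprocess.run(
        ["git", "diff", "--cached", "--name-only"],
        capture_output=True, text=True, check=True,
    )
    return completed.stdout.splitlines()


def hook_exit_code(changed_paths: list[str], role: str) -> int:
    """Exit status for a pre-commit hook: non-zero blocks the commit."""
    if role != "implementation":
        return 0  # Constraint sessions and humans may edit constraint files.
    blocked = protected_changes(changed_paths)
    for path in blocked:
        print(f"blocked: {path} is a constraint file")
    return 1 if blocked else 0


changed = ["src/app.py", "tests/unit/test_app.py", "web/jest.config.js"]
print(hook_exit_code(changed, role="implementation"))  # prints two "blocked" lines, then 1
```

In a real hook you would pass `staged_paths()` instead of the hard-coded list and exit with the returned status. Because local hooks can be skipped, the same check belongs in CI as well.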

How to Get Started

Start with a linter or type checker. These require zero test-writing effort and give AI immediate pass/fail signals on every change.

Then add tests for the code paths AI touches most. You don’t need 100% coverage, just enough that AI can self-verify its own changes instead of waiting for you.

I feed this post as context to Claude at the start of new projects, then ask it to identify which hard artifacts are missing and suggest what to add first. It works as a checklist that adapts to whatever codebase you point it at.