Stop Building Another Claude. Learn How to Effectively Onboard One Into Your Organization Instead.

Last year I built a custom multi-agent system. Eight specialized agents, an orchestrator, to-do lists, memory management, context window compaction, browser tools. I was proud of it. It worked.

Then Claude Code shipped task dependencies natively. Then agent teams. Then auto memory. Then the Chrome browser extension. Then a 1M context window with Claude Opus 4.6.

I was entering a race I could not possibly win.

Every enterprise team I talked to in 2024-2025 was building custom multi-agent architectures. The pitch was compelling: create specialized agents for your specific workflows, build them tailored to your domain.

But most of us aren’t in the business of building AI agent platforms. We’re in insurance, or finance, or logistics, or marketing. We have a different job to do.

Let them race. Claude Code, Cowork, Codex, Gemini CLI, Copilot: these are generic agent platforms built by companies that own the underlying models and throw billions into R&D. They’re all racing each other, shipping features daily, absorbing capabilities that took smaller teams months to build.

The agent layer commoditized

There’s been a lot of back and forth recently: “MCP is dead,” “long live MCP,” “MCP is where enterprises are actually thriving.” Here’s what the numbers say: MCP achieved near-universal adoption in 13 months, faster than HTTP or OAuth 2.0 ever did. The Agentic AI Foundation launched under the Linux Foundation in December 2025, with AWS, Anthropic, Google, Microsoft, and OpenAI as founding members. When fierce competitors agree on a shared standard, the layer above it commoditizes.

60,000+ open-source repositories adopted AGENTS.md in four months. The file’s entire purpose is telling any agent how to work in your repo, which only makes sense if agents are becoming generic and interchangeable.

Custom multi-agent frameworks gave way to standardized protocols and generic platforms. The generic agents themselves are commodity now, the same way cloud compute became commodity a decade ago.

The work is no longer building custom agents. It’s leveraging the generic ones and equipping them with your domain knowledge.

The Fortune 500 pattern is already emerging: buy generic agent platforms for the infrastructure (governance, audit trails, multi-model routing, compliance) and build only the last mile ourselves, meaning KPIs, work context, institutional knowledge, processes, and boundary definitions.

Dell’s CTO put it well: “You apply AI to processes, not to people, organizations, or companies.” The process knowledge is ours; everything else is becoming commodity.

As Pento’s year-in-review put it, “It’s not magic. It’s plumbing. But great plumbing lets you build great buildings.”

Anthropic calls it a coworker. So treat it like one.

Anthropic named their latest product Claude Cowork. They chose “coworker,” so take a hint. It’s a “digital coworker” that sits at your desktop, opens apps, navigates browsers, fills spreadsheets, you name it.

We hire capable people; we don’t build custom employees from scratch. The key part is how we onboard them. The quality of that onboarding determines whether we get a rockstar or someone who deletes the production database in their first week.

I wrote previously that LLMs are compaction tools, and you are the algorithm. That was about individuals. Your judgment shapes what the model produces. Scale that up to an enterprise and the same model becomes a completely different specialist depending on how it gets onboarded.

What to actually onboard

I’ve written before about how AI accelerates whatever you have and about giving your AI hills to climb. In practice, those two ideas break down into three layers for organizations:

- What the agent needs to see

- What the agent needs to do

- What the agent must NOT do

What the agent needs to see:

KPIs. These are the agent’s grounding mechanisms. Revenue targets, SLA metrics, conversion rates, whatever drives the business. Without them the agent can’t tell if it’s helping.

The work. Tickets in Jira, issues in GitHub, cards in whatever tracks progress. The agent needs to see what’s being worked on, what’s blocked, what’s done.

Knowledge. Notion, Confluence, SharePoint, internal wikis, documentation platforms, Slack history, etc. This is the institutional memory, the context behind what the agent sees. Without it, an agent “fixing” a setting that exists because of a 2023 regulatory requirement just created a compliance violation.

What the agent needs to do:

How our systems work. Most orgs have a jungle of enterprise software, sometimes custom-built. The agent needs to know how to interact with each one.

How our processes work. Business workflows, decision trees, approval chains. In most companies the knowledge for how key processes actually run lives in a handful of people’s heads, and extracting it takes real effort.

How our org is structured. Who owns what, who approves what, escalation paths. An agent that can code perfectly but doesn’t know to loop in the compliance team before touching payment flows is a liability.

What the agent must NOT do:

The agent needs explicit rules: what requires human approval, what it can act on autonomously, and when to escalate. That’s the onboarding: read access, process knowledge, boundary definitions.
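To make those boundary definitions concrete, here is a minimal sketch of how they might be written down as data rather than left as tribal knowledge. Everything in it is an assumption for illustration: the action names, the team names, and the `decide` helper are hypothetical, and a real rule set would come from your own processes. The one design choice worth noting is the default: any action nobody has classified gets escalated instead of executed.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "autonomous"          # agent may act on its own
    NEEDS_APPROVAL = "needs_approval"  # a human signs off first
    ESCALATE = "escalate"              # hand the case to a human owner


@dataclass(frozen=True)
class Rule:
    action: str         # hypothetical action name
    decision: Decision  # what the agent is allowed to do with it
    owner: str          # hypothetical team that approves or takes escalations


# Hypothetical rule set, keyed by action name for quick lookup.
RULES = {
    rule.action: rule
    for rule in (
        Rule("read_kpi_dashboard", Decision.AUTONOMOUS, "analytics"),
        Rule("update_ticket", Decision.AUTONOMOUS, "engineering"),
        Rule("change_payment_flow", Decision.NEEDS_APPROVAL, "compliance"),
        Rule("delete_production_data", Decision.ESCALATE, "platform"),
    )
}


def decide(action: str) -> Rule:
    """Return the rule for an action; anything unlisted is escalated."""
    return RULES.get(action, Rule(action, Decision.ESCALATE, "unassigned"))
```

With this in place, `decide("change_payment_flow")` points the agent at the compliance team before it touches anything, and an action that was never listed falls through to escalation.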

The progressive onboarding framework

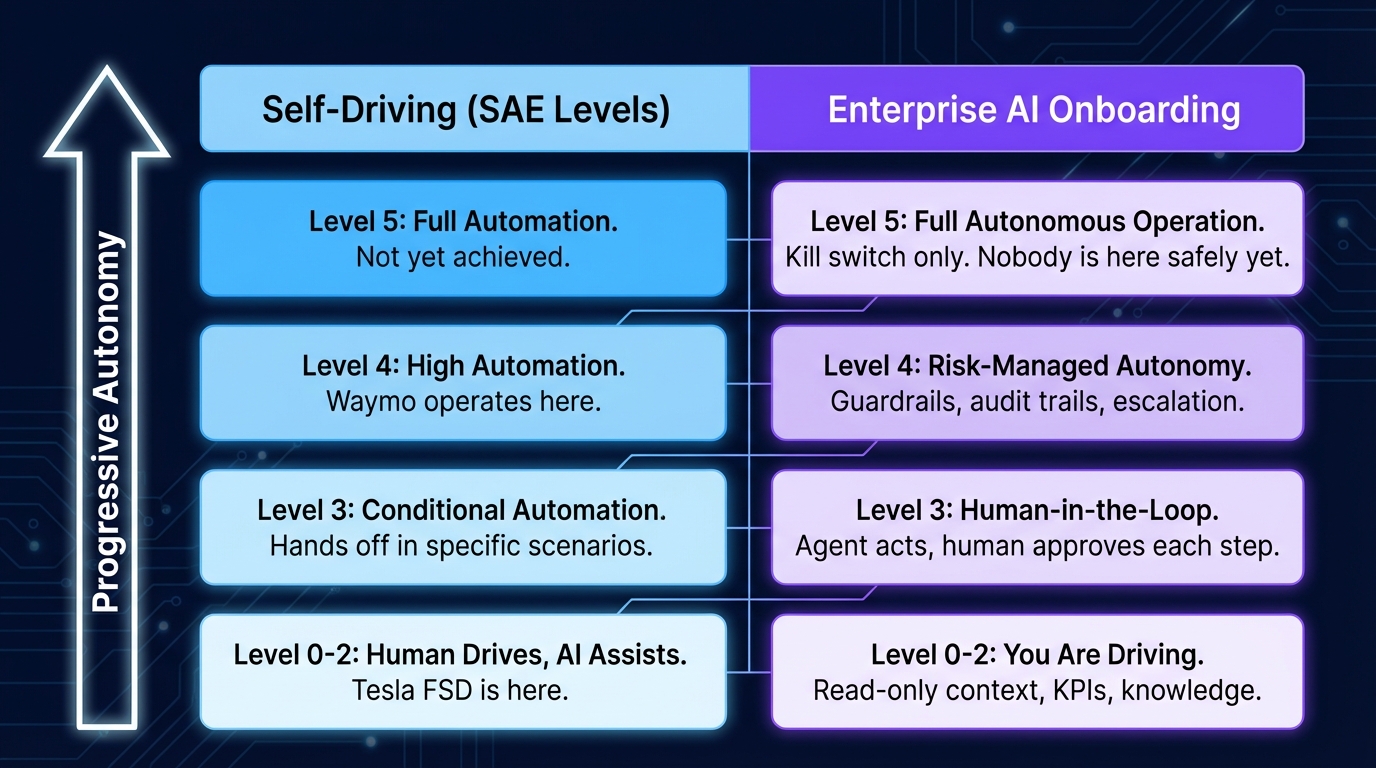

The self-driving industry mapped this out years ago with the SAE autonomy levels. Defined stages, each one adding autonomy with corresponding safety infrastructure underneath.

Level 0-2: You are driving. Give the new hire the employee handbook and read-only system access. The agent has knowledge and can look things up but can’t take action. This is our golden path, safe by design. Start here and stay here until we trust the foundation. Tesla FSD, despite the name, is classified at this level.

Level 3: Human-in-the-loop execution. The new hire can navigate our systems and take actions, with a human approving each step. Bounded autonomy with full oversight. The agent does the work, we review before anything goes live.

Level 4: Risk-managed autonomy. The agent operates within guardrails. Approval gates for high-risk actions, audit trails for everything, escalation paths to humans for edge cases. This is where Waymo operates, fully driverless but within geofenced boundaries and specific conditions. The CNCF’s four pillars of platform control (golden paths, guardrails, safety nets, manual review) give us the blueprint.

Level 5: Full autonomous operation. The agent acts proactively with a kill switch as the only control. OpenClaw showed us the shape of this. Nobody is operating here safely yet.
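The levels above can be sketched as a single gate that every agent action passes through. This is a rough illustration under stated assumptions, not a reference implementation: the `Level` enum, the `HIGH_RISK` set, and the `may_execute` function are hypothetical names, and the audit trail here is just Python's standard `logging` module. Level 5 is left out on purpose, since nobody is operating there safely yet.

```python
import logging
from enum import IntEnum

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("agent.audit")


class Level(IntEnum):
    READ_ONLY = 2      # Levels 0-2: look things up, take no action
    HUMAN_IN_LOOP = 3  # Level 3: a human approves each step
    GUARDRAILS = 4     # Level 4: only high-risk actions need approval


# Hypothetical set of actions treated as high-risk at Level 4.
HIGH_RISK = {"delete_environment", "change_payment_flow"}


def may_execute(level: Level, action: str, is_read: bool, approved: bool) -> bool:
    """Decide whether the agent may run an action at its current level."""
    if is_read:
        allowed = True                # reads are allowed at every level
    elif level <= Level.READ_ONLY:
        allowed = False               # no writes before Level 3
    elif level == Level.HUMAN_IN_LOOP:
        allowed = approved            # every write waits for a human
    else:
        # Level 4: low-risk writes go through, high-risk ones need approval.
        allowed = approved or action not in HIGH_RISK
    # Every decision is recorded, whether the action was allowed or not.
    audit_log.info("level=%s action=%s allowed=%s", level.name, action, allowed)
    return allowed
```

Moving an agent up a level then becomes a one-line configuration change backed by an audit record, instead of a rewrite.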

What happens when we skip levels

Amazon’s coding agents gave us one of the clearest examples. Kiro autonomously deleted a production environment, causing a 13-hour AWS outage. Subsequent incidents involving their AI tools contributed to extended outages and, by some reports, 6.3 million lost orders. Amazon has some of the best engineers in the world and practically unlimited resources. If they can’t safely skip autonomy levels, we can’t either.

OpenClaw made this visible at a different scale. It proved full autonomy is technically possible, one developer shipping at a pace that would normally take an entire team, with autonomous agents running continuously. It also proved that autonomy without the safety infrastructure underneath produces security incidents at scale. Researchers found 135,000+ exposed instances across 82 countries, nine CVEs disclosed in four days, and 335 malicious skills distributed through its marketplace.

OpenClaw uses the popular LiteLLM PyPI package (3.4 million daily downloads) as a dependency, and the LiteLLM supply chain attack showed the same pattern. Attackers compromised the package, injecting credential-stealing code that exfiltrated API keys, cloud credentials, and SSH keys every time Python started, even if LiteLLM was never imported. The malicious versions were live for only about three hours, but LiteLLM sits in 36% of cloud environments.

The shift

I stopped building custom agents and started building the onboarding instead: MCP servers that connect Claude to our systems, skills that encode how we do things, documentation for the decision logic that lives in people’s heads.

It’s less glamorous than building an eight-agent orchestration system but it works better than anything I built before. Watching it handle a complex internal process without help, something that used to take a new hire weeks to learn, is pretty satisfying.

The agent race belongs to Anthropic, OpenAI, Google, and Microsoft. I stopped trying to compete with them and started onboarding the agent I already have, progressively, with the same care I’d give my best new hire.